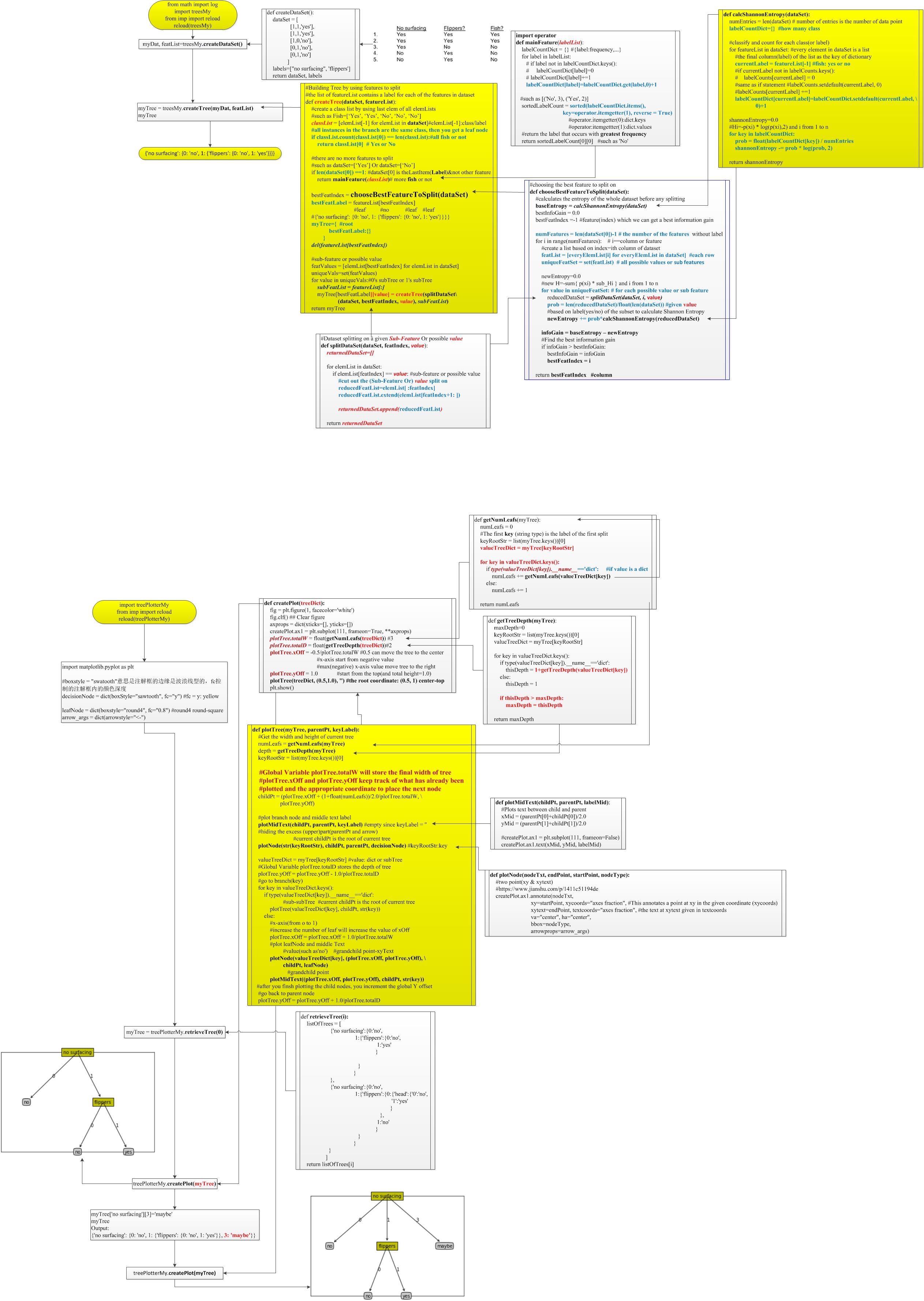

How does the Decision Tree algorithm work?

In a decision tree, to predict the class of a given record, the algorithm starts from the root node of the tree. It compares the value of the root attribute with the corresponding attribute of the record (from the real dataset) and, based on the comparison, follows the matching branch and jumps to the next node. At the next node, the algorithm again compares the attribute value with the sub-nodes and moves further. It continues this process until it reaches a leaf node of the tree. The complete process can be better understood using the algorithm below:
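As a minimal sketch of the root-to-leaf traversal described above (an illustration, not the source's own listing), the Python snippet below walks a small hand-written tree. The tree layout, the attribute names `salary` and `distance`, and the `predict` helper are all hypothetical and chosen only for this example.

```python
# Hypothetical toy tree: each decision node is a dict naming the attribute it
# tests and holding one branch per attribute value; a leaf is a plain label.
tree = {
    "attribute": "salary",
    "branches": {
        "low": "decline",
        "high": {
            "attribute": "distance",
            "branches": {"far": "decline", "near": "accept"},
        },
    },
}


def predict(node, record):
    """Walk from the root to a leaf, following the branch that matches the record."""
    while isinstance(node, dict):          # still at a decision node
        value = record[node["attribute"]]  # the record's value for the tested attribute
        node = node["branches"][value]     # follow the branch and jump to the next node
    return node                            # reached a leaf: this is the predicted class


print(predict(tree, {"salary": "high", "distance": "near"}))  # accept
print(predict(tree, {"salary": "low", "distance": "near"}))   # decline
```

The loop stops as soon as the current node is no longer a dict, which is how this sketch recognizes a leaf. A record whose attribute value has no matching branch would raise a `KeyError`; a fuller implementation would need to decide how to handle that case.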

- In a decision tree, there are two types of nodes: the Decision Node and the Leaf Node. Decision nodes are used to make a decision and have multiple branches, whereas leaf nodes are the output of those decisions and do not contain any further branches.
- The decisions or tests are performed on the basis of the features of the given dataset.
- It is a graphical representation for getting all the possible solutions to a problem or decision based on given conditions.
- It is called a decision tree because, similar to a tree, it starts with the root node, which expands into further branches and constructs a tree-like structure.
- In order to build a tree, we use the CART algorithm, which stands for Classification and Regression Tree algorithm.
- A decision tree simply asks a question and, based on the answer (Yes/No), further splits the tree into subtrees.
- The accompanying diagram illustrates the general structure of a decision tree.
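The two node types listed above can be sketched as a small data structure. The Python snippet below is only an illustration under stated assumptions: the class names `Leaf` and `DecisionNode`, the helpers `count_leaves` and `rules`, and the feature names and thresholds are hypothetical, and each decision node is restricted to a single yes/no question on a numeric feature.

```python
from dataclasses import dataclass
from typing import Union


@dataclass
class Leaf:
    """Leaf node: holds an outcome and has no further branches."""
    label: str


@dataclass
class DecisionNode:
    """Decision node: asks one yes/no question, 'is feature <= threshold?'."""
    feature: str
    threshold: float
    yes: "Node"  # subtree followed when the answer is Yes
    no: "Node"   # subtree followed when the answer is No


Node = Union[Leaf, DecisionNode]


def count_leaves(node: Node) -> int:
    """Count the outcomes (leaf nodes) reachable from this node."""
    if isinstance(node, Leaf):
        return 1
    return count_leaves(node.yes) + count_leaves(node.no)


def rules(node: Node, path: tuple = ()) -> list:
    """Return one readable decision rule per leaf, root question first."""
    if isinstance(node, Leaf):
        return [" and ".join(path) + " -> " + node.label]
    question = f"{node.feature} <= {node.threshold}"
    return (rules(node.yes, path + (question,))
            + rules(node.no, path + (f"not ({question})",)))


# Hypothetical tree with illustrative (not fitted) thresholds.
root = DecisionNode(
    "petal_length", 2.5,
    Leaf("setosa"),
    DecisionNode("petal_width", 1.7, Leaf("versicolor"), Leaf("virginica")),
)

print(count_leaves(root))
for rule in rules(root):
    print(rule)
```

Each printed rule corresponds to one path from the root to a leaf, which mirrors the idea that branches carry the decision rules and leaves carry the outcomes.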

Decision Tree Classification Algorithm

Decision Tree is a supervised learning technique that can be used for both classification and regression problems, but it is mostly preferred for solving classification problems. It is a tree-structured classifier, where internal nodes represent the features of a dataset, branches represent the decision rules, and each leaf node represents the outcome.
